Object recognition is used for a variety of tasks: to recognize a particular type of object (a moose), a particular exemplar (this moose), to recognize it (the moose I saw yesterday) or to match it (the same as that moose).

Visual recognition seems effortless but it is actually extremely difficult. Is difficult because the images of an object differ hugely as the exemplar, viewpoint or other viewing condition is varied.

One of the impressive accomplishments of visual recognition is that we can recognize an object from many different viewpoints, even though the images of that object are very different from one another. We can also very rapidly catagorize an object (e.g., as a duck) even though there are many different kinds of ducks including drawings of ducks and rubber ducks.

even if you have never seen them before.

Recognition normally works well in spite of variations in lighting, shading, reflection, shadows, etc.

Prosopagnosia (face blindness)

.

Check out Bill's face blindness home page. Bill didn't realize he was face blind until age 49, but relates lots of anecdotes from his early life suggesting that he was born with it. He remembers when he was 6 years old at the movies, telling a friend that it was really dumb for the bank robbers to cover only their faces because you could still see all the rest of them. He passed his mom walking on the street one day and didn't notice her. He was in the Navy for four days and freaked out because he couldn't tell anyone apart, given that they all had the same haircuts and wore the same uniforms.

Prosopagnosia is more common than previously believed, affecting

about 2.5% of the population. In other words, you probably know

somebody who suffers to some degree from face blindness. An

article about face blindness appeared in the New York Times on July

18, 2006. Similar articles have appeared in The

Times, Time

Magazine, The

Boston Globe, and The

Economist. There is a website (www.faceblind.org) devoted

to research prosopagnosia and face perception.

People don't usually just have a problem with only faces. Rather, it's often recognition tasks that involve distinguishing between similar objects that are all in the same catagory (e.g., birds, flowers), a point that we'll come back to later

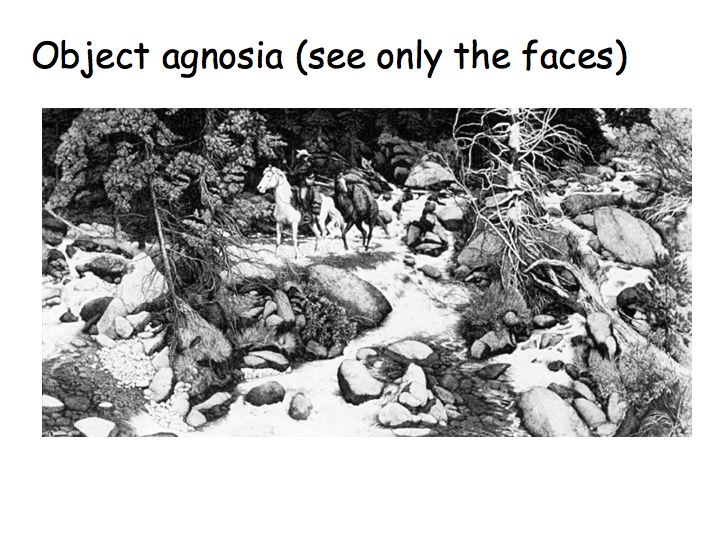

Object agnosia: Morris Moscovich studied a patient with object agnosia (that couldn't recognize common objects), but had no trouble with faces.

This patient can describe pictures (like those above) verbally but cannot name them correctly. Download an example description by someone with visual (object) agnosia (3 Mb mpeg).

Visual agnosia is not that uncommon; it affects a number of people who suffer a stroke to visual areas of the brain. What was remarkable about the Moscovich's report is that the patient failed to recognize objects but could still easliy recognize faces. It takes a long time for us to find the 13 faces hidden in above picture (by Doolittle). But this patient sees only the faces (not the objects), and can point them all out immediately.

Double dissociation: some patients show a specific deficit for recognizing faces, other subjects show deficits for recognizing all other objects. This suggests that faces and objects are processed in separate, perhaps non-overlapping, brain areas.

Face inversion effect: This suggests that upright faces are processed differently.

Turn the page upside-down (or stand up and turn your head upside-down if you are working at a computer screen) so that the faces are now rightside-up... You don't see upside-down faces in the usual way.

Careful experiments support this demo that rightside-up faces and upside-down faces are processed differently. In a sequential matching task, on each trial subjects were shown an unfamilar face, followed by brief interval, and then followed by second face. Subject responded "same'' or "different''. There were two key results:

Each panel plots the response of an IT neuron (firing rate over time) in response to each of the pictures. Top row:

Bottom row:

Functional (columnar) architecture for faces: Tanaka used optical imaging in IT to demonstrate columnar organization.

The figure below shows images of the same cortical area obtained for five different views of a doll face. Systematic movement of the (dark) activation spot with rotation of the face. Colors at the bottom show outlines of the activation spots for several different experiments (in different brains or hemispheres). Through this and other experiments, this supplies evidence that neurons in nearby columns respond to similar complex feature patterns.

fMRI and face selectivity: One human brain area responds most to faces. A neighboring brain area responds most to pictures of buildings and familiar places. Yet another brain area responds to pictures of objects that are neither faces nor places.

This figure is from a study in which subjects viewed pictures of faces in comparison to non-face pictures. Left: slices through each subject's brain showing a region in the ventral temporal lobe that responds to faces. Right: Amplitude of brain activity (numbers) in the "face area" in response to each picture. The response is greater for faces than for places (compare the two pictures outlined in red).

This figure is from a study in which subjects viewed pictures of buildings and familiar places. Left: slices through each subject's brain showing a region in the ventral temporal lobe that responds to faces. Right: Brain activity in the "place area". The response is greater for places and buildings than for other objects. The conclusion from these (and a number of other) fMRI experiments is that faces and objects are processed in separate brain areas, separated by several centimeters.

Role of expertise: Mike Tarr had subjects practice for many weeks to learn to make fine discriminations in a novel category of objects, that Tarr called Greebles. The Greebles had names (which the subjects learned), genders, and families.

At first, performance was like that for objects, e.g., no inversion effect. After lots of practice, performance was like that for faces, i.e., better at recognizing upright greebles.

Tarr followed up on this with an fMRI study. The left two columns are from before practice: when subjects viewed pictures of greebles there was no activity in the "face" area (small white outline). The right two colums were made after lots of practice: the brain activity shifted to the "face" area so that the brain activity was very similar for Greebles and for faces.

Human single-neuron electrophysiology: Patients with severe epilepsy are often studied in the hospital for a period of several days to carefully locate the abnormal brain tissue that is causing seizures, before removing any brain tissue. These patients undergo surgery to have some electrodes implanted in their brain to record the electrical activity of the brain. In some cases, it is possible to record action potentials from individual neurons. Then they sit in a hospital bed waiting for seizures to occur. When the seizures do occur, the electrical measurements from the implanted electrodes help to locate the diseased tissue very precisely. Once the location has been determined, the patients undergo another operation to have the electrodes removed along with the diseased brain tissue that is causing the seizures. While in the hospital with the electrodes implanted, in between seizures, some of these patients have agreed to participate in perceptual and cognitive neuroscience experiments. Hence, there are a handful of reports in the neuroscience journals of the responses of individual neurons in human brains.

Below is an example of the data from such an experiment. This particular neuron was in the medial temporal lobe and responded very selectively to pictures of Jennifer Aniston. Each panel shows a picture and below that is a graph of the neuron's response to that photo (firing rate over time). The two vertical dashed lines in each graph indicate when the photo was presented in time. The patient was shown hundreds of photos but the neuron responded only to Jennifer. It responded to many different photos of Jennifer, but not Jennifer when she was photographed with Brad Pitt. It appears to be the "Jennnifer Aniston" cell, the neuron in the brain that is responsible for recognizing Jennifer. An important caveat, however, is that this part of the brain is known to be involved in memory. This particular neuron be activated by some memory that is triggered by the photo (perhaps the memory of a "Friends" episode), and may have nothing per se to do with the recognition.

Summary

Evidence for brain areas that are functionally specialized for face recognition:

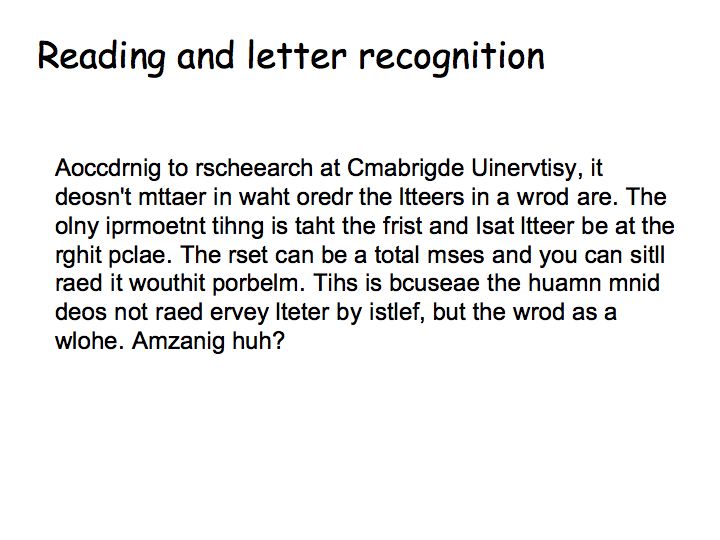

Reading and Letter Recognition

Letter recognition is, like face recognition, believed to be handled by special-purpose circuitry in the brain.

Recognition by parts: Biederman suggested that recognition works by decomposing objects into a fixed set of primitive shapes (Biederman suggests that there are 36 such primitives), that he calls geons.

Observe that many objects can be constructed by combining just a few of these primitives. The idea is that we first recognition each of the constituent geons and then the spatial relationship between them.

What is the evidence for Biederman's theory? One example is that we can no longer identify an object when we occlude the object's geons by obscuring their intersections. Do you find this compelling?

View-dependent recognition: This is an alternative theory. You store in your head a bunch of characteristic views (mental images) of objects. You recognize a new image by finding the closest match. That is, you don't use 3D shape to recognize objects. Only the 2D views of the objects

Each panel shows different views of the same objects. It's hard to see that they are all the same. Bülthoff/Edelman did an experiment to quantify this. They trained subjects on one set of views (like one of the panels above) of each object. After training, subjects looked at similar views of the training objects, different views of the training objects, and images of totally different objects. The task was simply to report on each trial if it was one of the training objects. Performance was good on similar views to those used in training, but quite bad for very different views.

Recognition in the Brain: Logothetis trained monkeys in an object recognition task like the Bülthoff and Edelman experiment (show object rotating a bit, test recognition of that object for similar views, different views, and distractor objects).

Top panel: View-selective responses were found in IT. Insets show many views of the same target. Firing rate reached a maximum for presentation of one particular object view (indicated by the box), and declined as the object was rotated away from this preferred view.

Bottom panel: Responses to distractors are much lower, even when the distractors contain features that are similar to the target, e.g., inverted V's indicated by dashed circles.

The conclusion from these studies and many others like them is that object recognition is viewpoint dependent.

Segmentation: divide the visual field into distinct parts/objects. Grouping: integrate visual information across different patches (fill in the missing parts). You can't recognize an object until it is properly grouped/segmented.

Sometimes boundaries/edges are not evidenced by a simple change in retinal image intensity/color, even in real scenes. So the visual system must make an unconscious inference to "complete" the missing bits of the boundaries.

Some V2 neurons respond to illusory contours. In this example, the neuron responds to the orientation of the illusory contour, not the orientation of the real lines that define it. Each white dot in the graphs represents a spike. Each row of the graph plots neural activity over time for a particular stimulus orientation. Orientation varies from row to row. There were lots of spikes for the vertically oriented line (right panel) and lots of spikes for the vertical illusory contour in (left panel). Note: V1 neurons don't respond in this way. Rather, V1 neurons always respond to the orientation of the real lines, ignoring the orientation of the illusory contour.

Responses to illusory contours have also been measured in the human brain with fMRI.

Grouping and crowding:

Fixate on the plus sign at the top right and you have no trouble recognizing the "D". But fixate on the minus sign at the bottom right and you have great difficulty recognizing any of the 3 letters. It looks as if the features from each of the letters get scrambled with those from the other letters. This is called crowding. To explain crowding... [More Here]

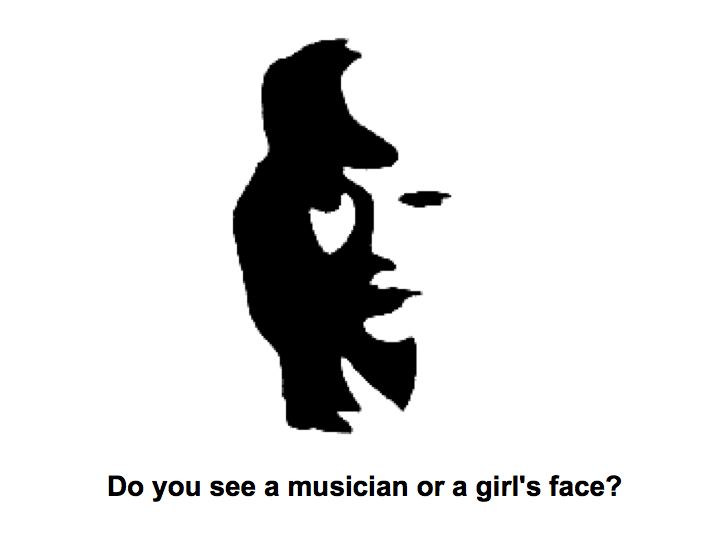

The visual system sometimes struggles (trying multiple groupings and segmentations) to perceptually organize a stimulus, to figure out which of the features belong together (grouping) and which of them do not, resulting in a dynamic percept in which different organizations compete with one another.

Grouping is based on a number of visual features, first described

by

the Gestalt psychologists and known as the Gestalt laws. These

laws

include:

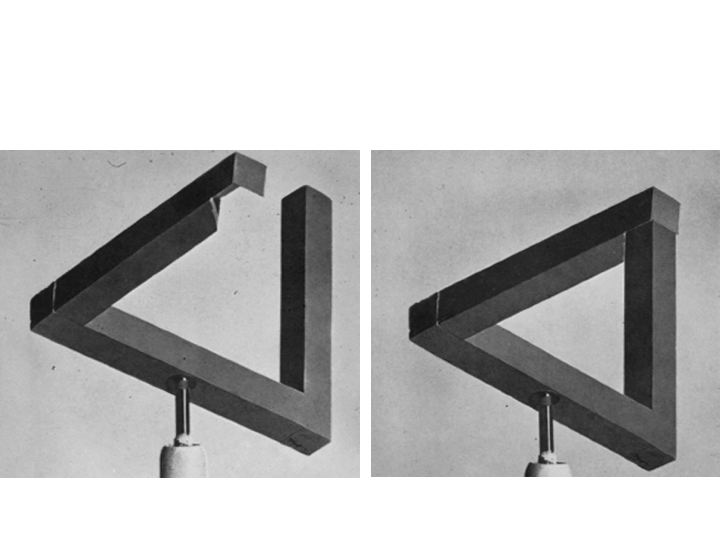

Impossible objects are images that have no globally consistent interpretation. Usually, impossible objects are just drawings with no consistent interpretation. But, one can construct real physical "sculptures" that when viewed from a certain angle look like impossible objects.

Many visual illusions rely on tricks with perceptual organization (segmentation, grouping, figure versus background, multiple possible perceptual organizations, impossible figures, etc.):